Constructor Tech joins forces with SAPE & SPF to empower private education

November 27, 2024

On November 27, 2024, SAPE (Singapore Association for Private Education), in collaboration with the Singapore Police Force (SPF), hosted an impactful seminar at Constructor Tech's office in Singapore. This event brought together over 30 attendees from the private education sector—directors, student affairs officers, and counselors—to explore ways of fostering safe and supportive educational environments. Dmitriy Istomin also participated in the seminar, sharing perspectives on how AI can support data-driven approaches in education.

With datasets growing exponentially, managing data has become a bottleneck. From inconsistencies and unstructured formats to manual errors, researchers often spend more time cleaning and organizing data than analyzing it. AI, with its ability to process and unify vast datasets, can remove much of this inefficiency.

Statistics are the backbone of rigorous research, yet many researchers struggle to select and apply the correct methods. The fear of misinterpretation often limits the depth and breadth of their analysis. AI bridges this gap by recommending the most suitable techniques based on the dataset’s structure.

Manual validation methods are reactive, time-consuming, and error-prone. Studies reveal that traditional data remediation processes catch only 9% of errors, leaving significant inaccuracies undetected. These undetected errors introduce bias and risk undermining the reliability of research findings.

Research doesn’t end with data analysis; effectively communicating results is just as important. Whether addressing academic peers, policymakers, or industry stakeholders, researchers need clear, impactful presentations. However, turning complex data into meaningful narratives is a challenge many face.

“We use Constructor Research to unify data produced by different research groups, run machine intelligence on the dataset, and accelerate our research as a result.”

Data is the lifeblood of modern research, but managing it effectively remains a significant challenge for researchers across disciplines. Whether in the natural sciences, social sciences, or humanities, the volume, complexity, and quality of data often determine the success of a study. Yet many researchers are left wondering: "Is my data good enough? Is my methodology sound?"

The stakes are high. According to a 2020 Gartner report, poor data quality costs organizations an average of $12.9 million annually, impacting productivity, decision-making, and compliance. Furthermore, between 2008 and 2020, global banks alone paid $321 billion in fines, often due to data quality issues. These numbers show how costly poor data quality is, and how much robust data management solutions stand to deliver.

Artificial intelligence (AI) offers a new approach to data interpretation and validation, helping researchers harness the full potential of their datasets, validate methodologies, and communicate findings effectively. Let’s explore how AI is transforming the research landscape.

In 2024, the transformative power of artificial intelligence and machine learning (AI/ML) was recognized with Nobel Prizes awarded to pioneering researchers:

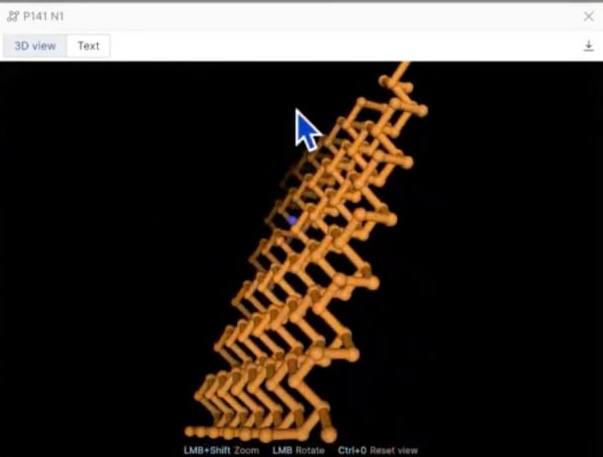

Physics Nobel Prize: Awarded jointly to John Hopfield and Geoffrey Hinton for their foundational discoveries and inventions that enable machine learning with artificial neural networks. Hopfield developed associative neural networks, known as Hopfield networks, which have been instrumental in advancing AI. Hinton, often referred to as the "godfather of AI," made significant contributions, including the development of the Boltzmann machine, that have been pivotal in the evolution of deep learning.

Chemistry Nobel Prize: One half was awarded jointly to Demis Hassabis and John Jumper for their work on protein structure prediction using AI; the other half went to David Baker for computational protein design. Hassabis and Jumper's development of AlphaFold, an AI program capable of predicting 3D protein structures with high accuracy, has revolutionized computational biology and has significant implications for drug discovery and understanding diseases.

AI is redefining research by streamlining processes, enhancing accuracy, and amplifying impact. AI tools bridge the gap between data complexity and actionable insights, empowering researchers to drive discoveries across disciplines and tackle pressing global challenges with confidence.